The OCTOPUS Framework for AI Agents

By Zvi Schreiber · April 2026 · 12 min read

The Agent Configuration Problem

Something strange is happening in organizations everywhere. Companies are deploying AI agents — not chatbots, not copilots, but autonomous agents that own real work. An AI agent triages support tickets. Another reconciles invoices. A third screens job applicants and schedules interviews. These aren’t demos or experiments. They’re doing the work.

But ask the people deploying these agents how they configured them, and you’ll hear something revealing: they’re making it up as they go. There is no standard model — no shared vocabulary, no framework — for how to set up an AI agent that works as a member of your team.

In the previous paper in this series, I argued that AI enables a new organizational model — the octopus — where unified intelligence is distributed throughout the organization, each arm semi-autonomous but coordinated. But that raises an immediate practical question: how do you configure those arms?

Most teams today fall into one of three traps.

The prompt-and-pray approach. Give the agent a system prompt, connect it to an API, and hope for the best. This works for demos. In production, it produces agents with no memory, no governance, and no accountability. When the agent makes a mistake — and it will — there’s no way to understand why or prevent it from happening again.

The RPA trap. Script every action the agent can take, in rigid sequences. This is the spider approach applied to AI: centralized control, no autonomy, and brittle processes that break whenever the world changes. You’ve built an expensive macro, not a team member.

The platform-specific silo. Use a vendor’s agent builder, locked into their ecosystem. Your CRM vendor’s AI agent can work with CRM data. Your accounting vendor’s AI agent can work with accounting data. But they can’t work together, can’t share context, and each has its own (if any) governance model.

What’s missing is a comprehensive framework — a mental model for the decisions you face whenever you give an AI agent a job in your organization. Not a product specification, but a way of thinking about agent configuration that works regardless of which tools you use.

That’s what OCTOPUS provides.

Seven Dimensions of Agent Configuration

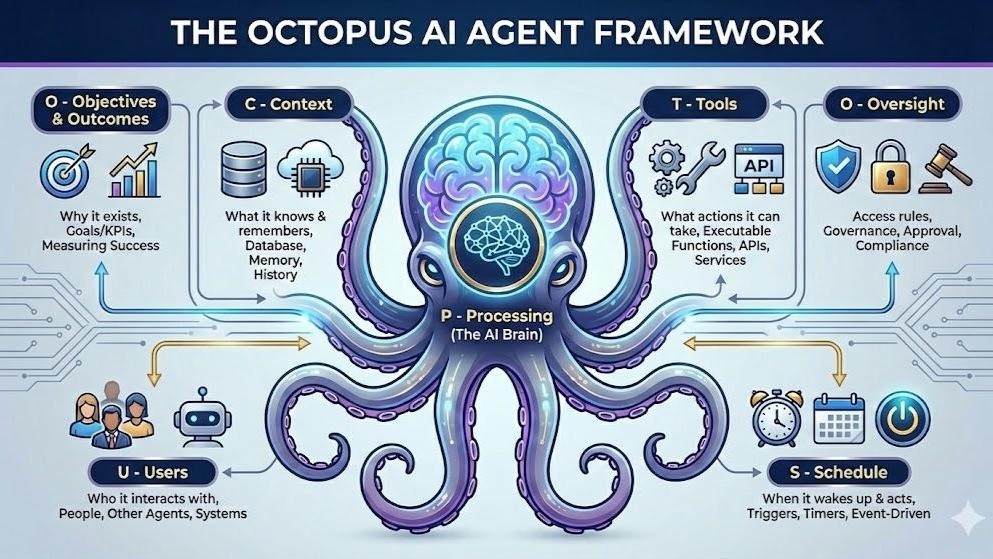

OCTOPUS is a framework with seven components, each representing one dimension of how an AI agent is configured to work within a team. The acronym isn’t accidental — it extends the octopus metaphor from organizational design down to the individual agent level. Each arm of the octopus has its own neural cluster, its own capacity for semi-independent action. These seven components are that neural cluster.

The framework is designed for progressive autonomy. You can start conservative — limited access, full human oversight, narrow scope — and expand each dimension independently as trust builds. An agent might begin with read-only access to one module, escalating every decision to a human. Months later, the same agent might operate across multiple modules with broad autonomy, only escalating novel situations. OCTOPUS provides the knobs to make that journey explicit and controlled.

O — Objectives and Outcomes

Every agent needs a reason to exist. This sounds obvious, but most agent deployments skip it. They define tasks (“process invoices”) without defining purpose (“ensure our vendors are paid accurately and on time, flagging anomalies and optimizing payment timing for cash flow”). The difference matters. A task-driven agent follows instructions. A purpose-driven agent makes judgment calls.

Objectives are the agent’s answer to “why do I exist?” They include a purpose statement, success criteria, and measurable key performance indicators. An agent responsible for support triage might have an objective like “resolve routine customer issues quickly and accurately, escalating complex cases to the right human with full context.” Its KPIs might include resolution time, customer satisfaction scores, and escalation accuracy.

This isn’t just documentation — it’s operational. When an agent faces an ambiguous situation, its objectives guide the decision. Should it spend more time researching a customer issue, or resolve quickly and move on? The answer depends on whether its objective emphasizes thoroughness or speed. Without explicit objectives, the agent defaults to whatever its training data suggests, which may or may not match what your business needs.

Objectives also enable something remarkable: progressive autonomy based on performance. If an agent consistently meets the KPIs defined in its objectives, its autonomy can expand. If it stumbles, autonomy contracts. This is how human organizations work: you give a new hire limited authority and expand it as they prove themselves. OCTOPUS makes the same pattern explicit and measurable for agents.
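To make this concrete, here is a minimal Python sketch of an objective with measurable KPIs and a rule that widens or narrows autonomy based on them. Everything in it is an assumption made for illustration: the class names, the numeric autonomy level, the KPI targets, and the one-step-up, one-step-down policy are hypothetical, not a specification of how OCTOPUS must be implemented.

```python
from dataclasses import dataclass, field


@dataclass
class Kpi:
    name: str
    target: float
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


@dataclass
class Objective:
    purpose: str
    success_criteria: list[str]
    kpis: list[Kpi] = field(default_factory=list)

    def all_met(self, observed: dict[str, float]) -> bool:
        # A KPI with no observation counts as not met.
        return all(k.name in observed and k.met(observed[k.name]) for k in self.kpis)


def next_autonomy_level(level: int, objective: Objective,
                        observed: dict[str, float], max_level: int = 3) -> int:
    """Hypothetical policy: one step up when every KPI is met, one step down otherwise."""
    if objective.all_met(observed):
        return min(level + 1, max_level)
    return max(level - 1, 0)


# Illustrative targets only; real values depend on the business.
triage = Objective(
    purpose="Resolve routine customer issues quickly and accurately, "
            "escalating complex cases to the right human with full context.",
    success_criteria=["Routine issues are resolved without human help"],
    kpis=[
        Kpi("resolution_minutes", target=30, higher_is_better=False),
        Kpi("csat", target=4.5),
        Kpi("escalation_accuracy", target=0.9),
    ],
)
```

A periodic review job could call `next_autonomy_level` with the latest measurements and propose the result to a human, rather than applying it automatically.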

C — Context

Context is the agent’s memory — what it knows, what it remembers, and who it is.

Most AI agents today have no persistent memory. Every conversation starts from zero. This is like hiring an employee who forgets everything at the end of each workday. They might be brilliant in the moment, but they can never build expertise, learn your preferences, or develop institutional knowledge.

OCTOPUS structures agent context into three layers:

The Briefing is the agent’s identity document — its role description, domain expertise, personality, communication style, and the background knowledge it needs for every interaction. Think of it as the employee handbook combined with the job description. A support agent’s briefing might include product documentation, common issue patterns, tone guidelines, and escalation criteria. The briefing is always loaded — it’s the foundation of every interaction the agent has.

Briefings aren’t static. They can be collaboratively maintained and versioned. Different team members can contribute sections — the product team maintains the product knowledge section, the support lead maintains the escalation criteria. Changes can require approval before they take effect, preventing accidental modifications to an agent’s core identity.

Knowledge Notes are the agent’s growing expertise. Unlike the briefing, which is curated and stable, knowledge notes are living documents that accumulate over time. After handling a tricky customer situation, the agent might create a note: “Customer X has a custom integration that causes error Y — apply workaround Z.” These notes persist across conversations, allowing the agent to build genuine institutional knowledge.

Knowledge notes also have a lifecycle. They can be flagged, updated, merged, or archived as they age or as the information becomes outdated. This prevents the common problem of AI memory becoming a graveyard of stale information.

The Conversation Journal is automatic. After each significant interaction, the agent reflects on what happened and records key takeaways. Over time, the journal becomes a searchable record of the agent’s experience — what it’s encountered, what worked, what didn’t. New conversations can draw on this history, giving the agent something that approximates genuine learning.

The three layers work together. The briefing provides stable identity. Knowledge notes provide accumulated expertise. The journal provides experiential memory. Together, they mean an agent that’s been running for six months is meaningfully more effective than one deployed yesterday — not because the underlying AI model improved, but because the agent has built context about your specific business.
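The three layers can be pictured as a small data model. The sketch below is one possible shape, assuming hypothetical names (`AgentContext`, `KnowledgeNote`, `JournalEntry`), a simple age-based archiving rule, and keyword matching for journal recall; a real system would likely use richer lifecycle states and better search.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class KnowledgeNote:
    text: str
    created: date
    status: str = "active"  # hypothetical lifecycle states: active, flagged, archived


@dataclass
class JournalEntry:
    day: date
    takeaway: str


@dataclass
class AgentContext:
    briefing: dict[str, str]  # section name -> text; always loaded
    notes: list[KnowledgeNote] = field(default_factory=list)
    journal: list[JournalEntry] = field(default_factory=list)

    def archive_stale_notes(self, today: date, max_age_days: int) -> int:
        """Archive active notes older than max_age_days; return how many changed."""
        changed = 0
        for note in self.notes:
            if note.status == "active" and (today - note.created).days > max_age_days:
                note.status = "archived"
                changed += 1
        return changed

    def build(self, query: str) -> str:
        """Full briefing, then active notes, then journal entries mentioning the query."""
        parts = [f"[{name}] {text}" for name, text in self.briefing.items()]
        parts += [f"[note] {n.text}" for n in self.notes if n.status == "active"]
        parts += [f"[journal {e.day}] {e.takeaway}" for e in self.journal
                  if query.lower() in e.takeaway.lower()]
        return "\n".join(parts)


ctx = AgentContext(briefing={"role": "Support triage agent",
                             "tone": "Friendly and concise"})
ctx.notes.append(KnowledgeNote(
    "Customer X has a custom integration that causes error Y; apply workaround Z.",
    date(2026, 1, 10)))
ctx.notes.append(KnowledgeNote("Old pricing table", date(2024, 1, 1)))
ctx.journal.append(JournalEntry(date(2026, 3, 2), "Billing dispute escalated to finance lead"))
ctx.journal.append(JournalEntry(date(2026, 3, 3), "Password reset resolved in one step"))
```

The briefing is loaded unconditionally, while notes and journal entries are filtered, which mirrors the split between stable identity and accumulated memory.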

T — Tools

Tools define what an agent can actually do. This is where the rubber meets the road — an agent might have brilliant objectives and deep context, but without access to the right tools, it can’t act.

OCTOPUS is built on a principle we call human parity: agents should access the same business capabilities that humans do. Not a simplified API, not a special “AI-friendly” subset, but the actual CRM, accounting system, task board, email, and file storage that the human team uses.

This is a deliberate design choice. When you give an agent a dumbed-down interface, you limit its ability to do real work. But more importantly, you create a parallel permission system — one for humans, one for agents — that’s expensive to maintain and inevitably diverges. Human parity means agents use the same permission infrastructure as humans. If your sales manager can see deals but not salary data, their sales agent inherits the same boundaries.

Tool access is granular and explicit. Each agent receives specific grants: which modules it can access, which operations it can perform, what data it can read and write. Nothing is switched on by default; every grant is deliberately configured within those shared permission boundaries. You don’t accidentally give your support agent access to your financial data.

Beyond internal modules, agents can be granted access to communication tools (email, messaging), web capabilities (search, fetch, browse), and connected services (Google Workspace, Microsoft 365). Each of these has its own permission layer. An agent might be allowed to draft emails but not send them without approval. It might be able to search the web for market research but not navigate to arbitrary URLs. The principle is explicit, intentional access — not implicit access to everything.
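A deny-by-default grant check along these lines might look like the following sketch. The `Grant` and `Decision` types and the resource names such as `support.tickets` are hypothetical, chosen only to show the draft-versus-send and search-versus-browse distinctions described above.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class Grant:
    resource: str   # e.g. "support.tickets", "email", "web"
    operation: str  # e.g. "read", "draft", "send"
    needs_approval: bool = False


def check(grants: list[Grant], resource: str, operation: str) -> Decision:
    """Deny unless a grant names this exact resource and operation."""
    for g in grants:
        if g.resource == resource and g.operation == operation:
            return Decision.NEEDS_APPROVAL if g.needs_approval else Decision.ALLOW
    return Decision.DENY


# Hypothetical grants for a support agent.
support_agent = [
    Grant("support.tickets", "read"),
    Grant("support.tickets", "write"),
    Grant("email", "draft"),
    Grant("email", "send", needs_approval=True),
    Grant("web", "search"),
]
```

Because the fallback is `DENY`, forgetting to configure a grant fails safe: the agent is blocked rather than silently over-permissioned.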

Agents can also be granted the ability to spawn sub-agents for specific tasks. A project management agent might spin up a research agent to investigate a technical question, giving it scoped access for the duration of the task. When the sub-agent completes its work, the access expires. This models how human managers delegate — you give someone a specific task with specific authority, not permanent broad access.
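One way to sketch task-scoped delegation is a context manager that ends the sub-agent's access when the task ends. The names below are hypothetical, and the rule that a parent can only delegate grants it already holds is an added assumption, not something the framework description states.

```python
from contextlib import contextmanager


class SubAgentAccess:
    """Access handed to a sub-agent for the duration of one task."""

    def __init__(self, grants: set[tuple[str, str]]):
        self._grants = set(grants)
        self.active = True

    def allows(self, resource: str, operation: str) -> bool:
        return self.active and (resource, operation) in self._grants


@contextmanager
def delegate(parent_grants: set[tuple[str, str]], wanted: set[tuple[str, str]]):
    # Assumption: a parent may only pass on grants it holds itself.
    not_held = set(wanted) - set(parent_grants)
    if not_held:
        raise PermissionError(f"parent cannot delegate: {sorted(not_held)}")
    access = SubAgentAccess(wanted)
    try:
        yield access
    finally:
        access.active = False  # access ends with the task, even if the task fails


# Hypothetical grants held by a project management agent.
pm_agent = {("tasks", "read"), ("tasks", "write"), ("web", "search")}

with delegate(pm_agent, {("web", "search")}) as research:
    allowed_during_task = research.allows("web", "search")
allowed_after_task = research.allows("web", "search")
```

Tying the lifetime of the access to the `with` block means there is no separate cleanup step to forget.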

O — Oversight

If Objectives answer “why does this agent exist?” and Tools answer “what can it do?”, Oversight answers the most important question of all: “who’s watching?”

This is the dimension that separates responsible AI deployment from reckless experimentation. And it’s the one most current agent platforms handle poorly — offering either no oversight (the agent does whatever it wants) or total lockdown (every action requires human approval, making the agent useless).

OCTOPUS models oversight as a spectrum with four tiers of trust:

Sandbox is training wheels. The agent can reason, plan, and propose actions — but nothing actually executes. It’s a dry run. This is where every new agent should start, and it’s invaluable for building confidence that the agent understands its role before giving it real authority.

Standard is the productive middle ground. The agent handles routine operations autonomously but escalates when it’s uncertain. The key insight here is that the agent itself assesses its own certainty — it doesn’t need a human to decide which actions need review, because the agent knows when it’s in familiar territory and when it’s not. A support agent that has seen a thousand password resets can handle them autonomously. When it encounters a novel billing dispute, it escalates — not because a rule told it to, but because it recognizes uncertainty.

Productive is earned autonomy. The agent operates independently within defined bounds, escalating only for truly exceptional situations. This is for agents that have demonstrated consistent performance over time.

Unrestricted is full autonomy — the agent acts without oversight. This is rare and reserved for agents doing low-risk, well-understood work where the cost of occasional mistakes is low relative to the cost of human review.

Beyond these tiers, oversight includes approval rules — specific overrides that require human sign-off regardless of trust level. An agent at the Productive tier might still require approval for actions above a dollar threshold, for external communications, or for changes to certain sensitive data. These rules are the guardrails that let you grant broad autonomy while maintaining control over the actions that matter most.
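The interaction between tiers and approval rules can be sketched as a single decision function. This is an illustration under stated assumptions: the `Action` fields, the 0.8 confidence threshold, the 500 dollar limit, and the returned strings are all hypothetical, and the agent's self-assessed `confidence` is simply taken as given.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Tier(Enum):
    SANDBOX = 0
    STANDARD = 1
    PRODUCTIVE = 2
    UNRESTRICTED = 3


@dataclass
class Action:
    kind: str                  # e.g. "refund", "send_email"
    amount: float = 0.0
    external: bool = False
    confidence: float = 1.0    # agent's self-assessed certainty, 0..1
    exceptional: bool = False  # falls outside the agent's defined bounds


ApprovalRule = Callable[[Action], bool]  # True means human sign-off is required


def decide(action: Action, tier: Tier, rules: list[ApprovalRule],
           min_confidence: float = 0.8) -> str:
    if tier is Tier.SANDBOX:
        return "dry_run"         # propose only; nothing executes
    if any(rule(action) for rule in rules):
        return "needs_approval"  # rules apply regardless of trust level
    if tier is Tier.STANDARD and action.confidence < min_confidence:
        return "escalate"
    if tier is Tier.PRODUCTIVE and action.exceptional:
        return "escalate"
    return "execute"


# Hypothetical rules: large amounts and external communications need sign-off.
rules: list[ApprovalRule] = [lambda a: a.amount > 500, lambda a: a.external]
```

Checking the approval rules before the tier logic is what lets a high-trust agent keep broad autonomy while the sensitive actions still pass through a human.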

Approvers can do more than just approve or reject. They can edit the agent’s proposed action — fixing a small error without blocking the entire workflow — or reject with feedback that helps the agent improve. This creates a feedback loop: the agent learns not just what to do, but what the organization considers acceptable.

P — Processing

Processing is the agent’s brain configuration — which AI model it uses, how much it’s allowed to think, and how it constructs its understanding of each situation.

The practical insight here is that not every task requires the most powerful — and most expensive — AI model. A simple data lookup or status update can use a fast, lightweight model. Complex reasoning, nuanced customer interactions, or financial analysis might require a frontier model. Processing configuration routes different types of work to different models, optimizing the cost-performance tradeoff automatically.

Token budgets are another practical control. Without limits, an agent can spiral into expensive deliberation on a simple task — the AI equivalent of overthinking. Processing configuration sets boundaries: this type of task gets a budget of X tokens, which forces the agent to be concise and efficient. Budgets scale with task complexity, so the agent has room to think when the problem warrants it.
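As a rough sketch of routing and budgets together, the snippet below maps task types to a model and a token ceiling, then meters usage against that ceiling. The model names are placeholders rather than real products, and the budget numbers and the `TokenMeter` class are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str         # placeholder model name, not a real product
    token_budget: int  # upper bound on tokens for one task


# Hypothetical routing table keyed by task complexity.
ROUTES = {
    "simple": Route("fast-small-model", 1_000),
    "moderate": Route("mid-size-model", 8_000),
    "complex": Route("frontier-model", 40_000),
}


def route(task_type: str, complexity_by_type: dict[str, str]) -> Route:
    """Pick a model and budget; unknown task types fall back to 'moderate'."""
    return ROUTES[complexity_by_type.get(task_type, "moderate")]


class BudgetExceeded(Exception):
    pass


class TokenMeter:
    def __init__(self, budget: int):
        self.budget = budget
        self.used = 0

    def spend(self, tokens: int) -> int:
        """Record usage and return what remains; raise if it would exceed the budget."""
        if self.used + tokens > self.budget:
            raise BudgetExceeded(f"{self.used + tokens} > {self.budget}")
        self.used += tokens
        return self.budget - self.used


# Hypothetical classification of task types.
complexity = {"status_update": "simple", "financial_analysis": "complex"}
```

How an agent should react to `BudgetExceeded` (summarize and stop, or escalate for a larger budget) is a policy choice this sketch leaves open.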

The agent’s system prompt — its core instructions — is assembled modularly from reusable components: safety guidelines, identity, personality, tone, domain expertise, tool instructions. This modularity means you can adjust one aspect of the agent’s behavior (say, making it more formal in customer communications) without rewriting everything. Different conversation types can assemble different prompt configurations, so the agent adapts its approach to the situation.
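Modular assembly can be as simple as named text components plus a per-conversation list of which ones to include. The component texts, profile names, and `assemble_prompt` function below are hypothetical examples, not a prescribed format.

```python
# Reusable prompt components, each maintained independently.
COMPONENTS = {
    "safety": "Never share customer data outside the company.",
    "identity": "You are the support triage agent.",
    "tone.casual": "Be friendly and brief.",
    "tone.formal": "Use formal, complete sentences.",
    "tools": "You may read and update support tickets.",
}

# Each conversation type lists the components it needs, in order.
PROFILES = {
    "internal_chat": ["safety", "identity", "tone.casual", "tools"],
    "customer_email": ["safety", "identity", "tone.formal", "tools"],
}


def assemble_prompt(conversation_type: str,
                    components: dict[str, str] = COMPONENTS,
                    profiles: dict[str, list[str]] = PROFILES) -> str:
    """Join the components listed for this conversation type into one system prompt."""
    return "\n\n".join(components[name] for name in profiles[conversation_type])
```

Making the agent more formal with customers then means editing one profile entry or one component, and nothing else in the prompt changes.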

This is a less glamorous dimension of agent configuration, but it’s where cost control and practical performance live. An organization running ten agents can easily spend thousands of dollars a month on AI inference. Processing configuration is the thermostat.

U — Users

Users defines the agent’s relationships — who it works with, who it reports to, and how it communicates.

This is where agents stop being tools and start being team members. An agent doesn’t just have capabilities; it has a manager who reviews its work, collaborators who depend on its output, and humans it can escalate to when situations exceed its authority.

OCTOPUS defines several relationship types: manager, collaborator, supervisor, delegate, peer. These aren’t just labels; they shape the agent’s behavior. An agent treats its manager differently from a peer. It provides more detail to a supervisor. It gives broader autonomy to a delegate. The relationships are directional: “Alice manages the support agent” and “the support agent reports to Alice” are two sides of the same relationship, each influencing behavior differently.

Critically, relationships are separate from data access permissions. An agent’s manager can review its work and adjust its configuration, but that doesn’t automatically mean the manager can see all the data the agent has access to. A recruiter’s agent might process sensitive candidate information that even the agent’s supervisor shouldn’t see directly. This separation prevents the common anti-pattern where administrative access to an AI system becomes a backdoor to all the data it processes.
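The separation can be sketched by keeping relationships and data access in two unrelated structures, so that one never implies the other. The relationship verbs (`manages`, `reports_to`, and so on), the `Team` class, and the dataset names are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical verb pairs for the two directions of each relationship type.
_PAIRS = [
    ("manages", "reports_to"),
    ("supervises", "supervised_by"),
    ("delegates_to", "delegated_by"),
    ("collaborates_with", "collaborates_with"),
    ("peer_of", "peer_of"),
]
INVERSE = {a: b for a, b in _PAIRS} | {b: a for a, b in _PAIRS}


@dataclass(frozen=True)
class Relationship:
    source: str
    kind: str
    target: str

    def inverse(self) -> "Relationship":
        return Relationship(self.target, INVERSE[self.kind], self.source)


class Team:
    def __init__(self) -> None:
        self.relationships: set[Relationship] = set()
        # Data access lives in its own table and is never derived from relationships.
        self.data_access: dict[str, set[str]] = {}

    def relate(self, source: str, kind: str, target: str) -> None:
        rel = Relationship(source, kind, target)
        self.relationships |= {rel, rel.inverse()}  # store both directions

    def can_configure(self, person: str, agent: str) -> bool:
        return Relationship(person, "manages", agent) in self.relationships

    def can_read(self, principal: str, dataset: str) -> bool:
        return dataset in self.data_access.get(principal, set())


team = Team()
team.relate("alice", "manages", "recruiting_agent")
team.data_access["recruiting_agent"] = {"candidates"}
```

Here Alice can adjust the recruiting agent's configuration, but a `can_read("alice", "candidates")` check still fails because managing the agent grants her nothing in the data access table.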

Agents can also have relationships with other agents, enabling delegation chains and multi-agent workflows. A project management agent might delegate research to a specialist agent, review the results, and synthesize them — mirroring how human teams operate.

Perhaps the most distinctive feature is acting-as: a human can step into an agent’s perspective, seeing the system as the agent sees it. This is invaluable for debugging (“why did the agent make that decision?”), for quality review (“let me see the agent’s inbox”), and for seamless handoff (“I’ll take over this customer interaction from where the agent left off”). It’s like being able to sit in someone’s chair and see their screen — but for AI agents.

S — Schedule and Automations

The final dimension answers: when does the agent act?

Most AI agents today are reactive — they wait for a human to invoke them. Schedule and Automations makes agents proactive. They can work on their own cadence, triggered by time or by events, without a human pressing a button.

Time-based schedules are straightforward: run a daily report at 7 AM, audit the week’s transactions every Friday, reconcile accounts on the last day of each month. These are the routines that every organization has and that are always the first things to slip when humans get busy.

Event-driven automations are more powerful. “When a new support ticket arrives, classify it and route it to the right team.” “When a deal closes, generate an invoice, update the forecast, and create onboarding tasks.” “When an employee submits a time-off request, check team coverage and approve if adequate.” These automations fire in response to real business events, giving agents the ability to respond to the world without waiting for instructions.

Automations include safeguards: throttling prevents an agent from being overwhelmed by a burst of events, cooldown periods prevent duplicate actions, and filters ensure the agent only responds to relevant triggers. An agent monitoring support tickets might only activate for tickets in a specific category or above a certain priority level.
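The three safeguards can be combined in one small trigger check. This sketch covers only event-driven automations and makes several assumptions: events are plain dictionaries with `type`, `key`, and `priority` fields, time is passed in as seconds, and throttled events are dropped rather than queued. All names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Automation:
    event_type: str
    matches: Callable[[dict], bool]  # filter on the event payload
    cooldown_s: float = 0.0          # minimum seconds between runs for the same key
    max_per_window: int = 100        # throttle: runs allowed per window
    window_s: float = 60.0
    _last_run: dict = field(default_factory=dict, init=False)
    _recent: list = field(default_factory=list, init=False)

    def should_fire(self, event: dict, now: float) -> bool:
        # Filter: ignore irrelevant event types and payloads.
        if event["type"] != self.event_type or not self.matches(event):
            return False
        # Cooldown: skip a repeat for the same key that arrives too soon.
        key = event.get("key")
        if key in self._last_run and now - self._last_run[key] < self.cooldown_s:
            return False
        # Throttle: cap the number of runs inside the sliding window.
        self._recent = [t for t in self._recent if now - t < self.window_s]
        if len(self._recent) >= self.max_per_window:
            return False
        self._last_run[key] = now
        self._recent.append(now)
        return True


def ticket(key: str, priority: int) -> dict:
    return {"type": "ticket.created", "key": key, "priority": priority}


# Hypothetical automation: only tickets with priority 3 or higher trigger the agent.
triage = Automation("ticket.created", matches=lambda e: e["priority"] >= 3,
                    cooldown_s=30, max_per_window=2, window_s=60)
```

Passing `now` in explicitly, instead of reading the clock inside the method, keeps the logic easy to test and replay.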

The shift from reactive to proactive is what transforms an agent from an assistant you have to remember to ask into a colleague who takes initiative. A proactive agent doesn’t wait to be told the monthly report is due — it produces it. It doesn’t wait for someone to notice that a customer’s satisfaction score has dropped — it flags it. It doesn’t wait for the morning meeting to surface that yesterday’s shipment was delayed — it’s already adjusting the timeline.

Why a Unified Framework

These seven dimensions are interdependent. An agent with great tools but no oversight is dangerous. An agent with oversight but no context is useless. An agent with objectives but no schedule is passive. Each dimension makes the others more effective.

This interdependence is why piecemeal approaches fail. If you bolt an “AI assistant” onto your CRM with its own prompt, its own permission model, and its own usage tracking — and then do the same for your accounting tool and your support tool — you end up managing three separate, inconsistent agent configurations. Each has different capabilities, different governance, and different blind spots. You’ve created the SaaS fragmentation problem inside your AI layer.

OCTOPUS provides a single framework for every agent in the organization. The support agent and the finance agent are configured using the same seven dimensions, even though their specific settings are entirely different. This consistency means you can reason about your AI workforce as a whole — comparing trust levels, reviewing oversight configurations, understanding the total scope of agent access across your business.

The framework also enables something more subtle: progressive deployment as a deliberate strategy, not an accident. You can start any agent in sandbox mode with narrow tools, full oversight, and a basic briefing. As the agent proves itself — measured against its objectives, reviewed through its journal, audited through its oversight logs — you expand each dimension independently. More tools. Broader context. Higher trust tier. More automation triggers. The journey from cautious experiment to trusted team member is explicit and controlled.

This is how you build trust in AI agents. Not by hoping they work, but by giving them a structure that makes their capabilities, limitations, and governance transparent to everyone in the organization.

The Bigger Picture

OCTOPUS is a framework for individual agent configuration, but it implies something larger about the kind of system agents need to live in.

An agent configured with OCTOPUS needs access to real business data — not through APIs and integrations, but natively, as part of the same system where humans work. It needs a permission model it shares with humans, so tool access and oversight aren’t a separate governance layer. It needs to communicate with humans and other agents through shared channels. It needs event-driven triggers that fire from real business events, not webhook subscriptions to external systems.

In other words, the agent needs to live inside the business platform — not beside it. The OCTOPUS framework describes the neural cluster in each arm of the octopus. But the arms need a body. That body is a unified platform where humans and AI agents work together on the same data, with the same permissions, in the same workspace.

That’s the subject of the next paper in this series.

Zvi Schreiber has spent two decades building technology companies and is now focused on building software for the age of human-AI collaboration.